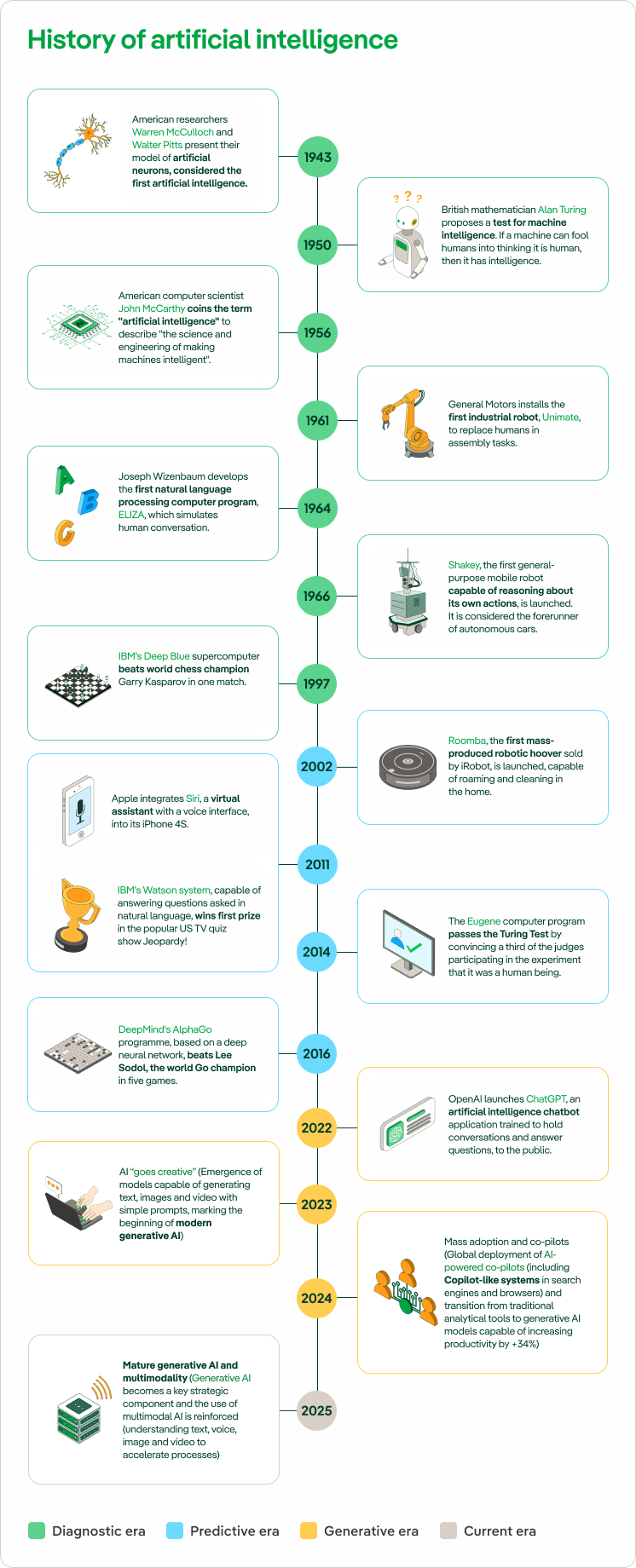

History of artificial intelligence

Artificial intelligence: birth, applications and future trends

While it might sound like something from a futuristic film, artificial intelligence (AI) is a field that is deeply embedded in our everyday lives. The idea of machines capable of imitating human intelligence is more than a century old, but it has advanced significantly in recent years. Today, AI is present in many aspects of our daily lives, often without us noticing. Discover what artificial intelligence is, what it is used for, what its risks and challenges are and what we expect from it in the future.

Artificial intelligence has emerged as a revolutionary tool with applications that are increasingly present in our everyday lives. From the creation of robots with human-like capabilities to virtual assistants that respond to voice commands on mobile devices and smart speakers, AI has captured the attention of global companies in the Information and Communications Technology (ICT) sector. Considered by many to be the Fourth Technological Revolution, following the expansion of mobile platforms and cloud computing, AI is driving profound transformation. However, its development is the result of a long process of scientific and technological advances that date back several decades.

Definition and origins of artificial intelligence

When we speak of "intelligence" in a technological context, we usually mean the ability of a system to use available information, learn from it, make decisions and adapt to new situations. It implies an ability to solve problems efficiently within the existing circumstances and constraints. The term "artificial" means that the intelligence in question is not inherent in living beings, but is created through the programming and design of computer systems.

As a result, the concept of "artificial intelligence" (AI) refers to the simulation of human intelligence processes by machines and software. These systems are developed to perform tasks that, if performed by humans, would require the use of intelligence, such as learning, decision-making, pattern recognition and problem solving. Examples include managing huge amounts of statistical data, detecting trends in that data and making recommendations based on those trends, or even carrying the recommendations out.

Today, AI is not dedicated to creating entirely new knowledge, but rather focuses on collecting, processing and analysing data to maximise efficiency in decision-making. The three fundamental pillars on which AI is based are:

- Data. This is the information that is collected and organised so that the system can automate tasks. It can include numbers, text, images, sounds and other types of data.

- Hardware. This refers to the computing infrastructure that enables the processing of large volumes of data quickly and accurately, allowing software to run efficiently.

- Software. This consists of algorithms and mathematical models that train systems to recognise patterns within data, learn from them and generate results that optimise processes.

But what are AI algorithms? They are the sets of rules that give the machine its instructions. The main types are logic-based algorithms, which follow the rational principles of human thought, and algorithms that combine logic with intuition (deep learning), which model the way the human brain works so that the machine learns as a person would.
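The difference between instructions written by hand and behaviour learned from data can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the temperature readings, the labels and the function names (`rule_based_alert`, `learn_threshold`) are hypothetical, and real learning systems fit far richer models than a single threshold.

```python
from typing import List, Tuple


def rule_based_alert(temperature: float) -> bool:
    """Logic-based approach: a person writes the rule by hand."""
    return temperature > 80.0  # threshold picked by an expert (assumed value)


def learn_threshold(samples: List[Tuple[float, bool]]) -> float:
    """Learning approach: choose the threshold that misclassifies
    the fewest labelled examples."""
    values = sorted(v for v, _ in samples)
    # Candidate thresholds are the midpoints between neighbouring values.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def errors(t: float) -> int:
        return sum((v > t) != label for v, label in samples)

    return min(candidates, key=errors)


# Hypothetical labelled data: (temperature, did the machine overheat?)
data = [(60.0, False), (65.0, False), (70.0, False),
        (74.0, True), (78.0, True), (90.0, True)]
threshold = learn_threshold(data)
```

With this made-up data, the hand-written rule would miss the overheating cases at 74 and 78, whereas the learned threshold separates all six examples because it was derived from them.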

How was artificial intelligence born?

The idea of creating machines that mimic human intelligence was present even in ancient times, with myths and legends about automatons and thinking machines. However, it was not until the mid-20th century that their true potential was investigated, after the first electronic computers were developed.

In 1943, Warren McCulloch and Walter Pitts presented their model of artificial neurons, widely considered the first work in artificial intelligence, even though the term did not yet exist. Later, in 1950, the British mathematician Alan Turing published an article entitled "Computing Machinery and Intelligence" in the journal Mind, in which he asked the question: can machines think? He proposed an experiment that came to be known as the Turing Test, which, according to Turing, would make it possible to determine whether a machine could exhibit intelligent behaviour similar to or indistinguishable from that of a human being.

John McCarthy coined the term "artificial intelligence" for the Dartmouth workshop of 1956 and, in the late 1950s, created LISP, one of the earliest AI programming languages. Early AI systems were rule-based, and they paved the way for more complex systems in the 1970s and 1980s, accompanied by a boost in funding. Now, AI is experiencing a renaissance thanks to advances in algorithms, hardware and machine learning techniques.

As early as the 1990s, advances in computing power and the availability of large amounts of data enabled researchers to evolve learning algorithms and lay the foundations for today's AI. In recent years, this technology has seen exponential growth, driven in large part by the development of deep learning, which harnesses layered artificial neural networks to process and interpret complex data structures. This development has significantly transformed various AI applications, such as image and voice recognition, natural language processing (NLP) and autonomous systems, including self-driving vehicles and drones.
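To give a sense of what "layered" means here, the following Python sketch wires three simple threshold units into two layers so that together they compute XOR, a function that no single unit of this kind can represent. It is a toy illustration, not how production systems are built: the weights are set by hand, whereas in deep learning they are learned from data, and the function names are hypothetical.

```python
from typing import Sequence


def unit(inputs: Sequence[float], weights: Sequence[float], bias: float) -> int:
    """A threshold unit: outputs 1 when the weighted sum plus bias is positive."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


def xor_network(a: int, b: int) -> int:
    # Hidden layer: two units look at the same inputs in different ways.
    h_or = unit([a, b], [1.0, 1.0], -0.5)   # fires if a OR b is 1
    h_and = unit([a, b], [1.0, 1.0], -1.5)  # fires only if a AND b are 1
    # Output layer: fires when h_or is on and h_and is off.
    return unit([h_or, h_and], [1.0, -1.0], -0.5)


# Evaluate the network on every combination of binary inputs.
table = {(a, b): xor_network(a, b) for a in (0, 1) for b in (0, 1)}
```

The second layer works on the outputs of the first rather than on the raw inputs, which is the basic idea that deeper networks repeat many times over.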

Key milestones in AI: in 1943, the first model of artificial neurons was created; in 1997, IBM's Deep Blue defeated the world chess champion, Garry Kasparov; in 2022, ChatGPT brought generative AI into everyday human conversation; and by 2025, AI has matured and become multimodal, capable of working with text, voice, images and video.

Functions and purposes of artificial intelligence

Artificial intelligence plays a key role in the digital and sustainable transformation of various sectors. Not only does it create a favourable climate for the development of an increasingly advanced digital landscape, but it is also one of the most impactful sustainable technologies: it enables organisations and companies to reduce the amount of equipment, resources or materials they use. By delivering higher productivity with fewer inputs, it gives any company a digital and sustainable foundation.

AI has practical applications in a wide variety of sectors, where it drives efficiency, innovation and decision-making. The energy sector is one example.

Current uses of artificial intelligence (AI) in the energy sector

Artificial intelligence is transforming the energy sector by optimising resource management, improving efficiency and reducing costs. Some of the main current uses of AI include:

- Demand forecasting: AI makes it possible to anticipate patterns in energy consumption, adjusting production and distribution more precisely.

- AI for asset management: It facilitates the monitoring and maintenance of assets, optimising their lifespan and reducing operating costs through predictive analysis.

- AI for efficiency in smart grids: AI optimises the flow of energy in smart grids, minimising losses and improving service quality for users.

- Use of advanced analytics in networks: This enables in-depth real-time data analysis to improve the management of electrical networks, anticipating potential failures and improving service reliability.

- Predictive renewable models (wind and solar): AI uses meteorological and generation data to predict energy production from renewable sources, allowing them to be integrated more efficiently into the network.

- Predictive maintenance: Through predictive algorithms, AI can identify potential failures in equipment and systems, enabling preventive maintenance that reduces downtime and costs.
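As a rough illustration of the predictive maintenance idea in the list above, the Python sketch below flags sensor readings that drift far from a baseline learned from earlier readings. Everything in it is assumed for the example: the vibration values, the function name `flag_anomalies`, the baseline size and the three-standard-deviation cutoff. Real systems generally use richer models and many more signals.

```python
from statistics import mean, stdev
from typing import List


def flag_anomalies(readings: List[float],
                   baseline_size: int = 5,
                   k: float = 3.0) -> List[int]:
    """Return the indices of readings that lie more than k standard
    deviations from the mean of the first `baseline_size` readings."""
    baseline = readings[:baseline_size]
    mu, sigma = mean(baseline), stdev(baseline)
    return [
        i
        for i, r in enumerate(readings[baseline_size:], start=baseline_size)
        if abs(r - mu) > k * sigma
    ]


# Hypothetical vibration readings from a turbine bearing (arbitrary units).
vibration = [1.0, 1.1, 0.9, 1.0, 1.0, 1.05, 0.95, 1.8, 1.0]
suspect = flag_anomalies(vibration)
```

Here only the reading of 1.8 is flagged, which is the kind of early signal that could prompt an inspection before the equipment fails.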

Risks and challenges associated with artificial intelligence

Advances in artificial intelligence have transformed various areas and sectors, but they have also raised concerns about the risks and challenges that its development may bring, particularly in terms of privacy, algorithmic bias and sustainability.

Looking to the future: trends and projections for AI

The ethics of AI will remain a central concern, focusing on ensuring that systems are fair, equitable and respect users’ privacy. In this context, explainable AI (XAI) is becoming increasingly important to improve transparency and trust in automated systems.

As AI evolves, it is becoming increasingly specialised in areas such as personalised healthcare, with treatments tailored to individual needs based on genetic data, and education, where more inclusive and adaptive learning tools will be developed.

The convergence of AI with emerging technologies such as quantum computing, which promises to improve the speed and capacity of data processing, and advanced robotics, will enable the development of more effective autonomous systems in areas such as logistics and healthcare. What’s more, generative artificial intelligence, which includes tools such as advanced language models, is beginning to revolutionise sectors such as content creation, programming and creative design.

As artificial intelligence continues to expand, it is essential to establish global regulatory frameworks to ensure its ethical and responsible use, protecting both consumers and companies. AI must be integrated in a way that maximises its benefits and minimises its risks, particularly in terms of privacy, algorithmic bias and sustainability.